-

📂 Journal

“ai” is the worst thing to happen to society.

“ai” is the worst thing to happen to society. Removing more and more people from working, whilst making cancerous billionaires richer, is only ever going to result in greater and greater inequality. Everything else is parlour tricks. There is pretty much nothing good to come from any of this crap being peddled as “The greatest…

-

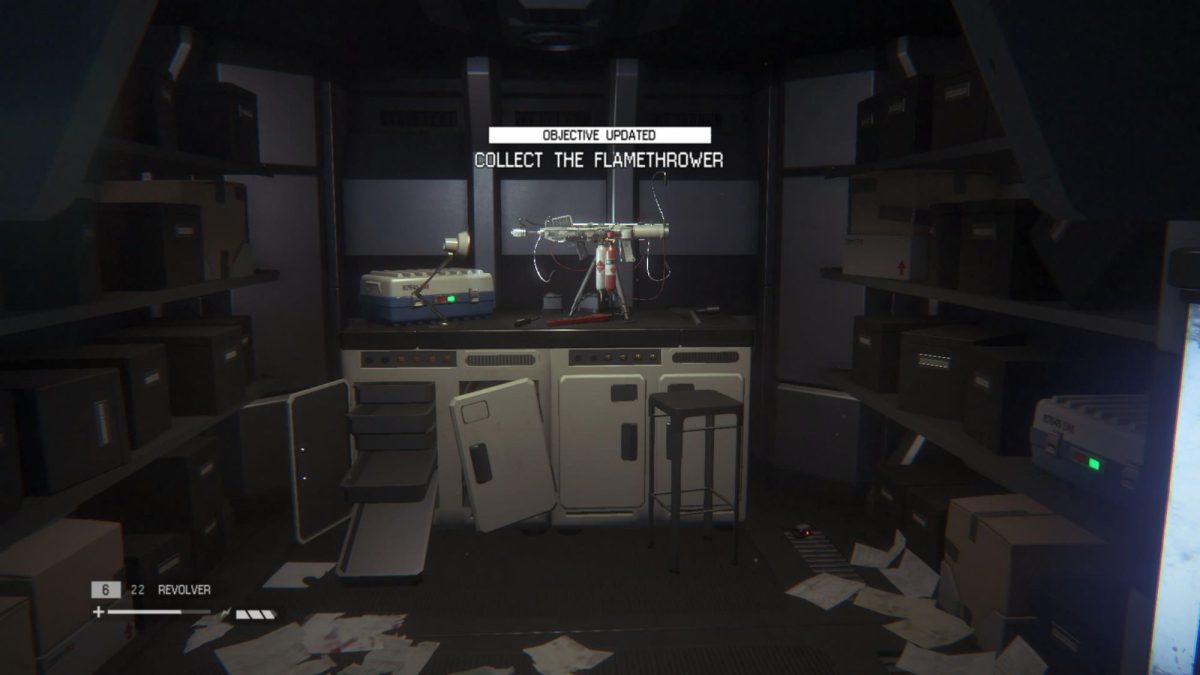

Collect the Flamethrower

Like mother, like daughter. Big girl shit about to go down.

-

The Space Jockey

Never thought I’d get to visit this location from the original Alien film. Incredible vibes and a scene almost identical to the one in the film.

-

📂 Notes

It’s 2am here and my tax code changed half an hour ago. I shit you not. So until April 2026 I’ll be about £200 worse off. There are much bigger things going on in the world than Dave’s tax code. But I felt I needed to write at least something to mark the occasion. Plus…

-

📂 Notes

My website (this website) does not track you in any way. I have even disabled access logging in my server config. Until today I did use Matomo, which was self-hosted. But then I came to the conclusion that I just don’t need it. At the same time I both care about the people who visit…

-

📂 Notes

Those early Elton John albums really are incredible. May need to make this week an Elton John Discography week.

-

📂 Notes

What follows is a comment I wrote on Link-Din to a future software developer looking for advice: Have fun first. Don’t choose some technology because people / job specs tell you that you should use it. Explore different languages. Build little projects in those different languages. Build your own personal website and blog about your…

-

📂 Notes

Could “Stereotomy” by “The Alan Parsons Project” be one of the greatest albums ever recorded? I think so. The title track alone is incredible. And the closing refrain of the album being a condensed version of the same song, bringing the album back full circle, is just top tier.

-

📂 Notes

Instead of deporting migrants, can we just deport all the racists instead please? They can take their flags for warmth.

-

📂 Notes

The one thing keeping me from migrating from WordPress to ClassicPress is the excellent ActivityPub plugin. It’s great, but I wish I had the time to port all the broken parts to the ClassicPress way of doing things, i.e. the WP things that have changed since 4.9.

-

📂 Notes

Why the heck do I still go on Link-Din? I think I’m literally only there in case I need any connections for work in the future. And now I’ve found myself replying to the abundance of AI bullshit on there. Who am I even trying to convince?

-

📂 Notes

Spooky month is upon us 👻 From: https://infosec.exchange/@catsalad/115297363682497652

I’m in the process of re-designing my website, using WordPress’ block site editor.