Programming

Linux, Laravel, PHP. My notes and mini-guides regarding development-related things.

-

Setting up a Digital Ocean droplet for a Lupo website with Terraform

Overview of this guide: My Terraform Repository used in this guide. Terraform is a program that enables you to set up all of your cloud-based infrastructure with configuration files, as opposed to the traditional way of logging into a cloud provider’s dashboard, manually clicking buttons, and setting things up yourself. This is known…

-

Beyond Aliases — define your development workflow with custom bash scripts

Being a Linux user for just over 10 years now, I can’t imagine my life without my aliases. Aliases help with removing the repetition of commonly-used commands on a system. For example, here are some of my own that I use with the Laravel framework: You can set these in your ~/.bashrc file. See mine in…

-

Setting up a GPG Key with git to sign your commits

Signing your git commits with GPG is really easy to set up and I’m always surprised by how many developers I meet who don’t do this. Of course, it’s not required to push commits and has no bearing on the quality of the code. But that green verified message next to your commits does feel good. Essentially…

Tagged: Linux

-

How I use vimwiki in neovim

This post is currently in progress, and is more of a brain-dump right now. But I like to share as often as I can, otherwise I’d never share anything 🙂 Please view the official Vimwiki GitHub repository for up-to-date details of Vimwiki usage and installation. This page just documents my own processes at the time. Installation…

-

General plugins I use in Neovim

I define a “general plugin” as a plugin that I use regardless of the filetype I’m editing. These add extra functionality to enhance my Neovim experience. I use Which-key for displaying keybindings as I type them. For example, if I press my <leader> key and wait a few milliseconds, it will display all keybindings…

-

Passive plugins I use in Neovim

These plugins I use in Neovim are ones I consider “passive”. That is, they just sit there doing their thing in the background to enhance my development experience. Generally they won’t offer extra keybindings or commands I will use day to day. You can view all the plugins I use in my plugins.lua file in…

-

How I use Neovim

I try to use Neovim for as much development-related work as possible. This page serves as a point of reference for me, and other interested people, for what I use and how I use it. Feedback is welcome, and I would love to know how you use Neovim too! My complete Neovim configuration files can be…

-

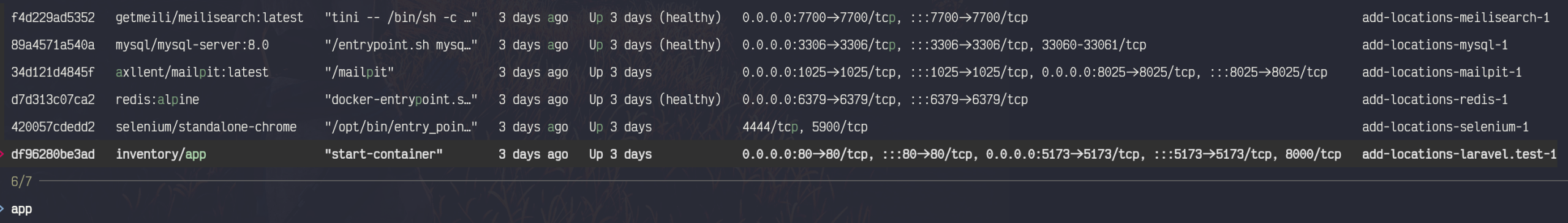

Inventory app — saving inventory items.

This is the absolute bare-bones implementation for my inventory keeping: saving items to my inventory list. Super simple, but meant only as an example of how I’d work when working on an API. Here are the changes made to my Inventory Manager. Those changes include the test and logic for the initial index…

Tagged: PHP

-

Connecting to a VPN in Arch Linux with nmcli

nmcli is the command line tool for interacting with NetworkManager. For work I sometimes need to connect to a VPN using an .ovpn (OpenVPN) file. This method should work for other VPN types (I’ve only used OpenVPN). Installing the tools: All three of the required programs are available via the official Arch repositories. Importing the…

-

Installing and setting up github cli

What is the GitHub CLI: The GitHub CLI tool is the official GitHub terminal tool for interacting with your GitHub account, as well as any open source projects hosted on GitHub. I’ve only just begun looking into it but am already trying to make it part of my personal development flow. Installation: You can see…

-

Adding Laravel Jetstream to a fresh Laravel project

I only have this post here as there were a couple of extra steps I took after the regular installation, which I wanted to keep a note of. Here are the changes made to my Inventory Manager. Follow the Jetstream Installation guide: Firstly, I just follow the official installation guide. When it came to running the…

-

How I organize my Neovim configuration

The entry point for my Neovim configuration is the init.lua file. Init.lua: My entry point file simply requires three other files. The user.plugins file is where I’m using Packer to require plugins for my configuration. I will be writing other posts about some of the plugins I use soon. The user.options file is where I set…